Introduction

Penetration testing and ethical hacking are awesome, and it’s all a TON of fun. Well, most of the time, when you’re not troubleshooting for hours on end, writing walls of text in a report, or explaining why RDP open on the internet is maybe a bad idea.

It’s also extremely easy to go venturing down every rabbit hole you can find, which means it’s easy to get distracted when something possibly critical turns up during reconnaissance or enumeration.

What happens as a result? We go full speed ahead towards that thing, ignoring everything else: all other steps in our process and all our obligations as penetration testers. Best case scenario, we are left with an undocumented finding that we struggle to reproduce, with no communication to the client and no understanding of the real risk it presents. Worst case scenario, we spend hours upon hours unable to validate a finding and unable to reproduce the steps we took to reach that conclusion. It’s a lose-lose.

There’s a better way. The following are 4 Common Pentesting Mistakes that I’ve made myself and seen others make. Hopefully, writing about my experience with these mistakes, and how I go about solving them, helps you avoid them or recognize them and recover quickly.

4 Common Mistakes: Simplified

- Rushing – Force yourself to slow down by making sure you consider the real-world impact, communicate timely and appropriately, document thoroughly and report as you go.

- Focusing only on exploits – Take time to learn the business you’re pentesting and what’s valuable to them and contextualize that to the vulnerability, the exploit, and the findings.

- Poor communication – If it’s unclear whether you should communicate, do so anyway.

- Bad documentation habits – Will you remember the syntax for that tool or the details of that obscure finding in a week or a month? Probably not. Write that stuff down.

4 Common Pentesting Mistakes and How to Avoid Them

Every other mistake I talk about in this blog post is compounded by the first one. That’s why I’ve put it at the top of this list. The order of importance for the other 3 mistakes is debatable. This one is not.

Mistake #1: Rushing

You’ve been in this situation before, right? You find some really interesting information during your recon phase. You have a hunch that it will immediately lead to a critical finding and, without batting an eye, you dive right into it. Four hours later, you come up for air and realize you:

- Didn’t take a single screenshot

- Didn’t document any of the commands or tools you ran

- Have so much tool output that you can’t actually remember what went where or why

- Didn’t record what you’re actually poking at or where to find it again, should you have to take a break, quit for the day, or, heck, communicate it to a client

That’s a recipe for disaster. Not only does that make your job much harder than it has to be, it allows for easily avoidable mistakes, which can lead to bad test results, bad findings, bad reports, and unhappy bosses, peers, and/or clients.

Solution

The time to have the map is before you enter the woods. What that means is, be prepared. How does that translate to “solving” the rushing mistake? Before an engagement begins, make sure you’ve got your checklists and notes primed and ready to go. Create folders for tool output and screenshots. As indicated above, here are some things that help me to slow down and make sure I’m being thorough in my testing.

Take screenshots. During the engagement, make sure you take screenshots of key information and findings. Remember that your client or boss is going to be reading a report that’s mostly text. Many times it’s hard to convey something through text alone. Use screenshots to help tell that story. In doing so, you will naturally slow yourself down.

Document commands and capture output. Running nmap? Document the command you ran and be sure to save the output with -oA. One of the most inefficient things you can do is run an nmap scan, fail to record the command switches, and not preserve the output. A day later you remember a key piece of information from the output, but you didn’t save it. Now you have to search your history and run that scan again, wasting time and making more noise (which could be a big deal if you were trying to be quiet).

Record your thought process. This is especially important when you haven’t fully executed an attack or you’re sure something is there but haven’t quite got to the pot of gold at the end of the rainbow yet. Write down what you’re poking at, why, and what your thought process is in doing so. Not only will it help you pick up where you left off after a break, but it will also help you convey the information to a client or boss or peer and it has the added benefit of helping you fully understand what it is you’re doing.
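Going back to the preparation step at the start of this solution, here is a minimal Python sketch of creating folders for notes, tool output, and screenshots before the engagement begins. The folder names and the prepare_engagement helper are assumptions made up for illustration, not a standard layout; swap in whatever structure matches your own checklists.

```python
from pathlib import Path

# Hypothetical folder layout; rename to match your own workflow.
SUBDIRS = ("notes", "screenshots", "tool-output/nmap", "report")


def prepare_engagement(root, name):
    """Create the engagement folder skeleton and a starter notes file."""
    base = Path(root) / name
    for sub in SUBDIRS:
        # parents=True builds nested folders; exist_ok=True makes reruns safe.
        (base / sub).mkdir(parents=True, exist_ok=True)
    notes = base / "notes" / "day-1.md"
    if not notes.exists():  # never overwrite notes that already exist
        notes.write_text(f"# {name} day 1 notes\n", encoding="utf-8")
    return base
```

Running it once before kickoff means every screenshot and scan result already has a home when you find that first interesting thing.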

So the moral of this mistake is to slow down and take your time. Document and capture screenshots to tell the story and record your thought process. Now onto the next mistake!

Mistake #2: Focusing only on exploits

One of our jobs, the primary job in fact, during pentests is to validate, demonstrate, and articulate risk to the business. It’s cool to run an exploit you found on GitHub (after you’ve read, tested, and vetted the code, of course :O, a topic for another blog post), but what does that mean in terms of risk to the business?

Sure, maybe there’s a vulnerability that allows you to exploit some web app running on some server somewhere. What does that actually mean in terms of risk to the business? Is there sensitive data on the server? Does the access allow you to further penetrate the target or move laterally in accordance with the mission of the engagement? That’s not to say that there is no value in the finding, but we must also remember that we have to consider the impact of our exploits, not just look at the exploit and the vulnerability in a vacuum.

Solution

Understand the business. Solving this mistake takes us all the way back to our early interactions with the client, peer, or whoever is sponsoring the testing. How does the company make money? What data, process, or technology is valuable to them? Once we know that, we will have a better idea of what we want to target in terms of simulating attacks.

Exploiting a critical point-of-sale system and proving that credit card numbers can be pulled from live transactions is much more impactful than proving you can exploit an unrelated server with no PII, no privileged credentials, and no opportunity to move laterally.

This is an important topic to discuss during a kickoff call. Never assume you know what the crown jewels are. Have the client or the test sponsor tell you what those things are. Keep in mind that this could be data, it could be a process, it could be a capability. Ask the question and listen carefully. Speaking of kickoff calls, this is a perfect segue to the next mistake!

Mistake #3: Poor communication

Find something strange? Unsure if a system is in scope? Found a vulnerability that’s extremely urgent? Want to attempt an exploit but unsure of its impact but know it would be high value for the client?

One of the worst things you can do is to go through an entire engagement and not speak to the client or test sponsor a single time. Not only are you leaving a perfect opportunity for relationship building on the table, but you also leave room for the person to be extremely surprised when you roll out a 50-page report full of critical findings, or the exact opposite.

I’ve learned that most of us security people don’t really like surprises, especially defenders.

Solution

The solution to this mistake is communication. Pick up the phone or send your contact an email and tell them you would like to hop on a call to discuss. Communicate often. Communicate clearly and as plainly as you can. Understand your audience. The person or group you are communicating with may not be as technically inclined as you, and they may need you to explain things in words and language they understand.

This is especially important during report debrief meetings, which often include a combination of technical and executive representatives. The phrase “explain it to me like I’m 5” is actually a pretty good strategy here. That’s not to say that executives are not smart or savvy or wouldn’t understand the technical bits if you explained them. That’s just not their job. They are there to make business decisions, not evaluate the technical bits. Skip the plain language and you will end up wasting 10 minutes explaining something, only for them to ask you to explain it again in a way they can understand.

Mistake #4: Bad documentation habits

This mistake manifests in a number of different ways, all of which have been mentioned already: no documentation or poorly written documentation, not reporting as you go, and not taking screenshots or taking vague screenshots with no annotations.

The question I always come back to when thinking about this mistake is: if I have to go back to my notes a month from now, will I be able to describe what I did, when I did it, etc.? The answer is always, unequivocally, no. I can’t even remember what I had for lunch yesterday. What was I saying?

Solution

See the solutions to Mistake #1: Rushing. All of the same ideas covered there are applicable to this mistake. For this section, I will provide a few concrete examples of what I mean when I say, take good screenshots, document commands, and record your thought process.

Take good screenshots

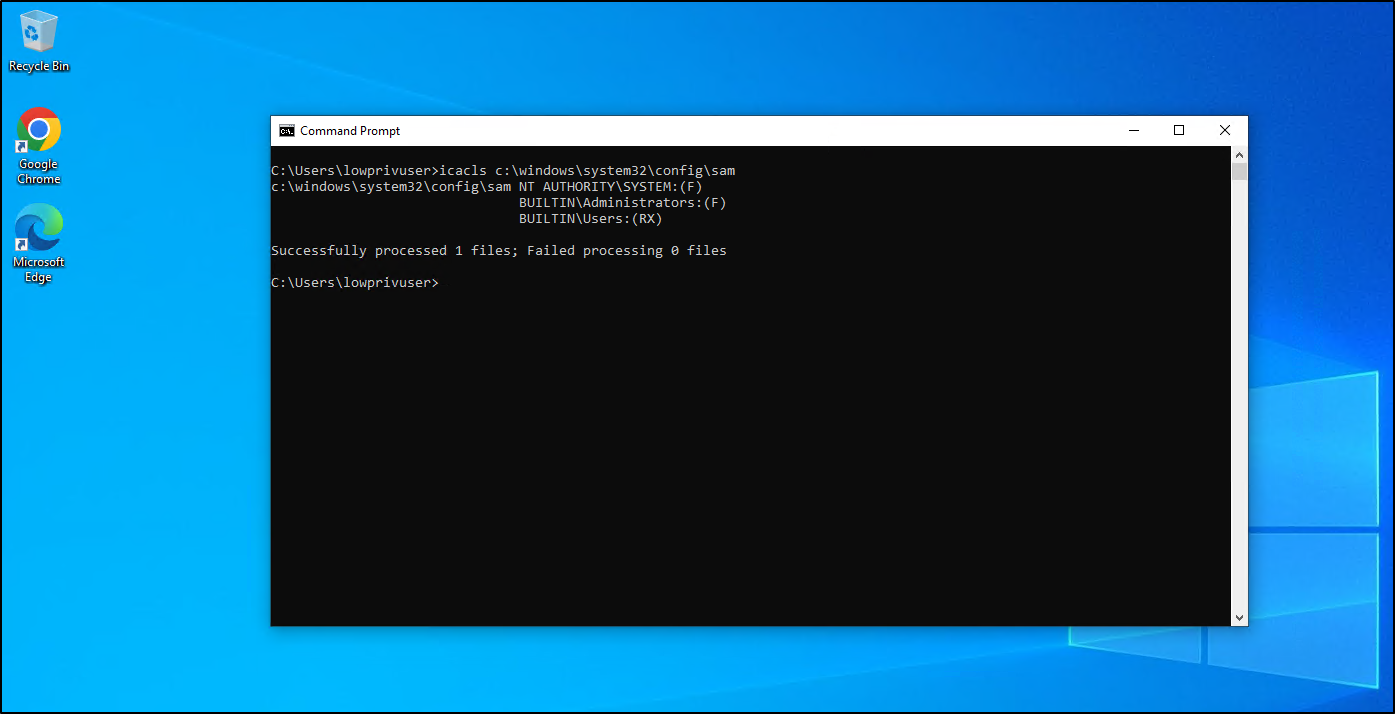

Non-technical people, please tell me what this screenshot is showing. How about what system this is showing? What about the user this command prompt is running as?

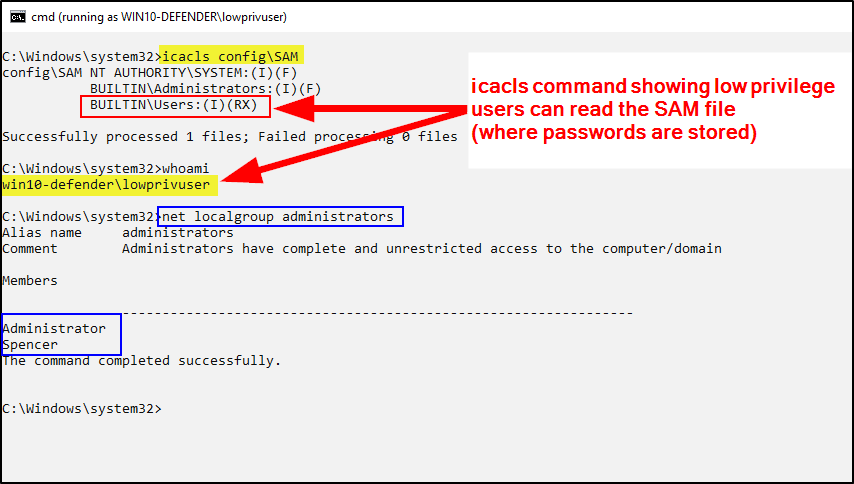

How about now?

You can immediately tell the differences between the two screenshots above. The first is hard to see and doesn’t call attention to anything specific. It’s unclear what this screenshot is showing. We are incorrectly assuming the reader is going to know or understand what we are showing.

The second screenshot is much better. First, the command terminal output is easier to read with black text on a white background. Second, there are boxes, arrows, and highlighting indicating areas of importance. Third, there is a brief description of what the screenshot is displaying.

Document commands and capture output

I don’t know how many of you have photographic memories, but I certainly don’t. More than that, I often refer to my notes from previous engagements to find specific commands or tools I used. Is a centralized wiki a great idea? Yes absolutely. However, sometimes referring back to notes is just the best option. Additionally, when the client asks you questions about a specific finding or asks to re-test and validate a fix, you will have that information when you need it.

Sure, some things are easy to remember, like nmap -sV -sC -oA nmap/initial 192.168.1.1. If you’ve watched any IppSec videos, that’s probably firmly cemented in your brain. Other commands may not be so easily recalled.

It’s important to not only document the commands used during engagements but also the output. This is maybe obvious for some, but this is your evidence. Capturing the output allows you to have less repeat work. For example, when running an nmap scan, save that output! Then you can refer back to the results whenever you need to instead of having to run the scan again and wait for it to finish.
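As one way to make this habit automatic, here is a minimal Python sketch that runs a command, saves its output to a timestamped file, and appends the exact command line to a running log. The run_and_log helper, the commands.log file name, and the log format are all assumptions for illustration; nmap’s own -oA option still does the job for nmap itself, and this wrapper is just a catch-all for tools without a save flag.

```python
import shlex
import subprocess
from datetime import datetime, timezone
from pathlib import Path


def run_and_log(cmd, out_dir, label):
    """Run cmd (a list of arguments), save its output, and log the command."""
    out_dir = Path(out_dir)
    out_dir.mkdir(parents=True, exist_ok=True)
    stamp = datetime.now(timezone.utc).strftime("%Y%m%dT%H%M%SZ")
    out_file = out_dir / f"{label}-{stamp}.txt"

    result = subprocess.run(cmd, capture_output=True, text=True)
    # Keep stdout and stderr together so no evidence is lost.
    out_file.write_text(result.stdout + result.stderr, encoding="utf-8")

    # Append timestamp, exact command, output file, and exit code.
    with open(out_dir / "commands.log", "a", encoding="utf-8") as log:
        log.write(
            f"{stamp}\t{shlex.join(cmd)}\t{out_file.name}\texit={result.returncode}\n"
        )
    return out_file


# Example call (not executed here; assumes nmap is installed, the nmap/
# folder exists, and the target is in scope):
# run_and_log(["nmap", "-sV", "-sC", "-oA", "nmap/initial", "192.168.1.1"],
#             "tool-output", "nmap-initial")
```

A week later, commands.log tells you what you ran and when, and the matching output file is the evidence, with no need to rerun the scan.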

Record your thought process

Admittedly, this is one of the hardest parts of documentation. Capturing commands and documenting scripts run during engagements is pretty straightforward. Writing down your thought process is a bit harder, albeit just as important.

This again goes back to being able to articulate to your client or boss or whomever, the reasoning and decision-making that went into finding a specific vulnerability or pursuing some attack path. The added benefit is that again, if you go back to your notes a week, a month, a year from now, you’re much more likely to recall and reproduce those same steps you performed initially.

My recipe for this is to record: 1) what I was doing 2) to what 3) where and 4) why. Here’s an example that incorporates the screenshots above.

    # Pentesting day 1 notes
    Testing ACLs on SAM/SECURITY/SYSTEM from lowprivuser on mydomain\test-pc, potential HiveNightmare
    > icacls c:\windows\system32\config\sam

These are more or less just cliff notes, but I know what they mean and I am leaving myself breadcrumbs to follow should I need them later on. Now I can refer back to my notes and understand very quickly what I was doing, on which system, and why.
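If free-form notes tend to lose one of those four pieces, a small template can nudge you to fill them all in. The following is a rough Python sketch of the what / to what / where / why recipe; the NoteEntry class, its field names, and the rendered line format are hypothetical choices for illustration, not a standard note format.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class NoteEntry:
    what: str          # 1) what I was doing
    target: str        # 2) to what
    where: str         # 3) where
    why: str           # 4) why
    command: str = ""  # optional: the exact command that was run

    def render(self, day=None):
        """Return the entry as one dated note line, plus the command if any."""
        day = day or date.today()
        lines = [
            f"[{day.isoformat()}] {self.what} on {self.target} "
            f"from {self.where}, {self.why}"
        ]
        if self.command:
            lines.append(f"> {self.command}")
        return "\n".join(lines)


# The example note from this post, expressed as an entry.
entry = NoteEntry(
    what="Testing ACLs",
    target="SAM/SECURITY/SYSTEM",
    where=r"lowprivuser on mydomain\test-pc",
    why="potential HiveNightmare",
    command=r"icacls c:\windows\system32\config\sam",
)
print(entry.render())
```

Because every field except the command is required, leaving out the where or the why raises an error instead of silently producing a note you can’t follow later.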

Report as you go

One of the reasons to take good screenshots, document commands, capture the output and record your thought process is so that you can build all those things into the report. Screenshots and command output are evidence. Your thought process can be used to fill in the description and solution of a vulnerability. All of these things make your reports more comprehensive which helps make the end product, the report, better.

One of the benefits of reporting as you go is that you are not overloading yourself at the end of the testing window. In that regard, it makes reporting much more manageable. Furthermore, documenting and describing a vulnerability as you find it allows you to more accurately represent the finding in the report. If you only have a few cliff notes to go off of when you sit down to write the report a few days or a week later, you have a much higher chance of not remembering the specifics of the vulnerability. Thus, you end up not describing it as well as you could have and not delivering as good an end product.

Closing

Security is fun and exciting, especially pentesting where you’re paid to hack, “break” things and create and use cool exploits. But we have to remember that we exist to help defenders defend. We exist to help businesses identify and mitigate risk, so they can continue to exist.

We have only scratched the surface of this topic. All of these mistakes could be their own blog post. And there are, of course, numerous other common mistakes. I’m curious to hear your thoughts and additions to this list. What are some other common mistakes you’ve seen or made yourself?

If you enjoyed this blog post or if you got value from it, please do consider sharing it!